Semantic-Aware Generative Approach for Image Inpainting

Deepankar Chanda

Texas A&M

Nima Khademi Kalantari

Texas A&M

Abstract

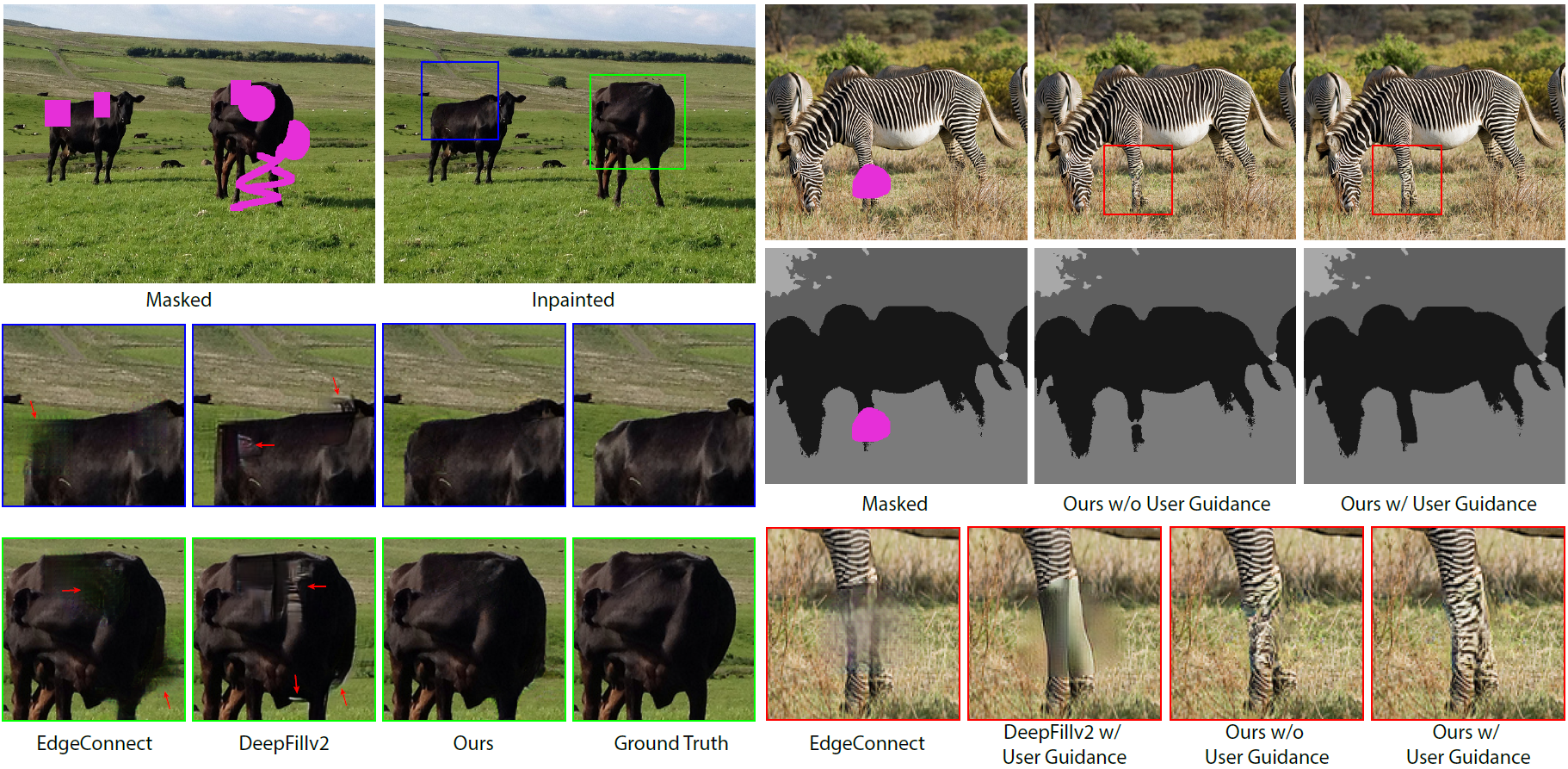

We propose a semantic-aware generative method for image inpainting. Specifically, we divide the inpainting process into two tasks: estimating the semantic information inside the masked areas, and inpainting these regions using that semantic information. To use the semantic information effectively, we inject it into the generator through conditional feature modulation. Furthermore, we introduce an adversarial framework with dual discriminators to train our generator. In our system, an input consistency discriminator evaluates how well the inpainted region matches the surrounding unmasked areas, and a semantic consistency discriminator assesses whether the generated image is consistent with the semantic labels. To obtain the complete input semantic map, we first use a pre-trained network to compute the semantic map in the unmasked areas and then inpaint it using a network trained in an adversarial manner. We compare our approach against state-of-the-art methods and show significant improvement in the visual quality of the results. Furthermore, we demonstrate the ability of our system to generate user-desired results by allowing a user to manually edit the estimated semantic map.
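The abstract does not spell out how the conditional feature modulation is implemented. As a rough illustration only, the sketch below assumes a SPADE-like scheme in which a single-channel feature map is normalized and then scaled and shifted per pixel according to that pixel's semantic class. The function name `modulate`, the per-class lookup tables `gamma` and `beta`, and the use of fixed values instead of learned layers are all assumptions made for this example, not the authors' implementation.

```python
from statistics import mean, pstdev


def modulate(features, labels, gamma, beta, eps=1e-5):
    """Hypothetical per-pixel feature modulation driven by a semantic map.

    features: 2D list of floats (one feature channel).
    labels:   2D list of int class ids, same shape as features.
    gamma:    dict mapping class id -> scale (assumed; learned in practice).
    beta:     dict mapping class id -> shift (assumed; learned in practice).
    """
    # Normalize the whole channel to zero mean and roughly unit variance.
    flat = [v for row in features for v in row]
    mu = mean(flat)
    sigma = pstdev(flat)

    out = []
    for feat_row, label_row in zip(features, labels):
        # Each pixel gets the scale and shift of its own semantic class.
        out.append([
            gamma[c] * (v - mu) / (sigma + eps) + beta[c]
            for v, c in zip(feat_row, label_row)
        ])
    return out


# Toy usage: a 2x2 feature map whose left column is class 0 and right column is class 1.
features = [[1.0, 3.0], [1.0, 3.0]]
labels = [[0, 1], [0, 1]]
modulated = modulate(features, labels, gamma={0: 2.0, 1: 0.5}, beta={0: 0.0, 1: 1.0})
```

In a real generator the scale and shift would typically be predicted from the semantic map by small learned layers rather than looked up per class, but the effect is the same in spirit: pixels with different labels are pushed toward different feature statistics.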

BibTeX

@InProceedings{10.2312:sr.20211291,
  title={Semantic-Aware Generative Approach for Image Inpainting},
  author={Deepankar Chanda and Nima Khademi Kalantari},
  booktitle={Eurographics Symposium on Rendering},
  publisher={The Eurographics Association},
  DOI={10.2312/sr.20211291},
  year={2021}
}

Acknowledgements

We thank the EGSR reviewers for their comments and suggestions. The website template was borrowed from Michaël Gharbi.