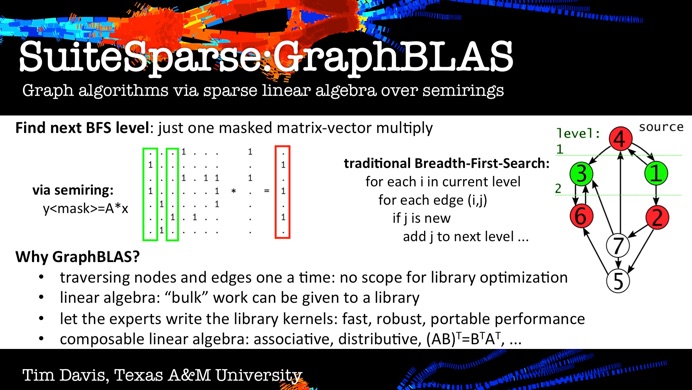

SuiteSparse:GraphBLAS is a full implementation of the GraphBLAS standard (graphblas.org), which defines a set of sparse matrix operations on an extended algebra of semirings using an almost unlimited variety of operators and types. When applied to sparse adjacency matrices, these algebraic operations are equivalent to computations on graphs. GraphBLAS provides a powerful and expressive framework for creating graph algorithms based on the elegant mathematics of sparse matrix operations on a semiring.
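To illustrate the idea above, here is a minimal, self-contained C sketch: one sparse matrix-vector kernel, parameterized by a semiring, computes different graph quantities depending only on which semiring it is given. This is not the SuiteSparse:GraphBLAS API. The names `semiring`, `csr`, `mxv`, `dist_to`, and the example graph `G` are hypothetical, chosen for illustration. In the library itself the corresponding operation is `GrB_mxv` with a `GrB_Semiring` argument; see the User Guide for its exact signature.

```c
#include <assert.h>
#include <math.h>    /* INFINITY */

/* Hypothetical semiring: an "add" monoid with its identity, plus a "multiply". */
typedef struct {
    double (*add)(double, double);
    double (*mul)(double, double);
    double zero;             /* identity of add; unstored entries act like this */
} semiring;

/* Hypothetical sparse adjacency matrix in compressed sparse row (CSR) form:
   A(i, colidx[p]) = val[p] for rowptr[i] <= p < rowptr[i+1]. */
typedef struct {
    int n;
    const int *rowptr;       /* length n+1 */
    const int *colidx;       /* length nnz */
    const double *val;       /* length nnz */
} csr;

static double plus(double a, double b)  { return a + b; }
static double times(double a, double b) { return a * b; }
static double min2(double a, double b)  { return a < b ? a : b; }

static const semiring PLUS_TIMES = { plus, times, 0.0 };      /* ordinary algebra */
static const semiring MIN_PLUS   = { min2, plus, INFINITY };  /* tropical algebra */

/* y = A (add.mul) x:  y[i] = add over stored k of mul(A(i,k), x[k]).
   Rows with no stored entries get s.zero. y must not alias x. */
static void mxv(double *y, const csr *A, const double *x, semiring s) {
    assert(y != x);
    for (int i = 0; i < A->n; i++) {
        double acc = s.zero;
        for (int p = A->rowptr[i]; p < A->rowptr[i + 1]; p++)
            acc = s.add(acc, s.mul(A->val[p], x[A->colidx[p]]));
        y[i] = acc;
    }
}

/* Distance from every node to `target`, by n-1 rounds of
   d = min(d, A min.+ d), a Bellman-Ford-style relaxation. */
static void dist_to(double *d, const csr *A, int target) {
    assert(A->n <= 64);                 /* sketch: fixed-size scratch vector */
    double y[64];
    for (int i = 0; i < A->n; i++) d[i] = (i == target) ? 0.0 : INFINITY;
    for (int round = 0; round < A->n - 1; round++) {
        mxv(y, A, d, MIN_PLUS);
        for (int i = 0; i < A->n; i++) d[i] = min2(d[i], y[i]);
    }
}

/* Hypothetical 4-node weighted digraph: 0->1 (1), 0->2 (4), 1->2 (2), 2->3 (1). */
static const int    ROWPTR[] = {0, 2, 3, 4, 4};
static const int    COLIDX[] = {1, 2, 2, 3};
static const double VAL[]    = {1, 4, 2, 1};
static const csr    G = {4, ROWPTR, COLIDX, VAL};
```

For example, with x = (1, 1, 1, 1), `mxv(w, &G, x, PLUS_TIMES)` gives each node's total outgoing edge weight, (5, 2, 1, 0), while `dist_to(d, &G, 3)` gives the shortest-path distances to node 3, (4, 3, 1, 0). Only the semiring changes between the two computations; the kernel is identical. The `zero` field matters: it is what a missing edge means, which is 0 under plus-times but infinity under min-plus.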

GraphBLAS is the engine inside RedisGraph, and appears as C=A*B in MATLAB R2021b and later.

Download the latest version, now with OpenMP parallelism and a MATLAB interface:

https://github.com/DrTimothyAldenDavis/GraphBLAS

GraphBLAS also appears as part of SuiteSparse (typically on a slower release cycle than this page). This page includes the most recent update and an archive of all prior versions.

See graphblas.org for the GraphBLAS community web page. Gábor Szárnyas maintains a list of GraphBLAS resources at https://github.com/szarnyasg/graphblas-pointers.

Papers on SuiteSparse:GraphBLAS:

- GraphBLAS User Guide (https://github.com/DrTimothyAldenDavis/GraphBLAS/blob/stable/Doc/GraphBLAS_UserGuide.pdf).
- T. Davis, Mohsen Aznaveh, and Scott Kolodziej, "Write quick, run fast: sparse deep neural network in 20 minutes of development time in SuiteSparse:GraphBLAS," IEEE HPEC’19. HPEC19.pdf.
- Mohsen Aznaveh, JinHao Chen, Scott Kolodziej, T. Davis, Tim Mattson, Bálint Hegyi, and Gábor Szárnyas, "Parallel GraphBLAS with OpenMP," CSC’20, under submission. CSC20_OpenMP_GraphBLAS.pdf.
- Tim Mattson, T. Davis, Manoj Kumar, Aydın Buluç, Scott McMillan, José Moreira, and Carl Yang, "LAGraph: a community effort to collect graph algorithms built on top of GraphBLAS," GrAPL’19. lagraph-grapl19.pdf.
- T. Davis, "Graph algorithms via SuiteSparse:GraphBLAS: triangle counting and K-truss," IEEE HPEC’18, 2018. Davis_HPEC18.pdf.
- P. Cailliau et al., "RedisGraph GraphBLAS Enabled Graph Database," 2019 IEEE International Parallel and Distributed Processing Symposium Workshops (IPDPSW), Rio de Janeiro, Brazil, 2019, pp. 285-286. https://doi.org/10.1109/IPDPSW.2019.00054 or 08778293.pdf.
- T. Davis, "Algorithm 1000: SuiteSparse:GraphBLAS: graph algorithms in the language of sparse linear algebra," ACM Trans. on Mathematical Software, 2019. toms_graphblas.pdf.

Older versions are available in the GraphBLAS archive.

Talks on GraphBLAS and RedisGraph are linked below. RedisGraph+GraphBLAS is the topic of the first 7 minutes and 20 seconds of the first talk. The second talk is longer and covers only RedisGraph and GraphBLAS.

RedisGraph v1.0 released: Nov 15, 2018

“By representing the data as sparse matrices and employing the power of GraphBLAS (a highly optimized library for sparse matrix operations), RedisGraph delivers a fast and efficient way to store, manage and process graphs. In fact, our initial benchmarks are already finding that RedisGraph is six to 600 times faster than existing graph databases!” (caveat: the TigerGraph results on the blog will be updated soon).

LAGraph: a library of graph algorithms that rely on GraphBLAS:

LAGraph is a new collaborative effort to develop graph algorithms that rely on GraphBLAS. You can download the package at https://github.com/GraphBLAS/LAGraph.

Acknowledgements: SuiteSparse:GraphBLAS would not be possible without the concerted and long-term efforts of the GraphBLAS.org community, in particular the GraphBLAS C API Specification Committee: Aydın Buluç (LBNL), Tim Mattson (Intel), Scott McMillan (CMU), José Moreira (IBM), and Carl Yang (CMU), and the GraphBLAS Steering Committee: David Bader (Georgia Tech), Aydın Buluç (LBNL), John Gilbert (UCSB), Jeremy Kepner (MIT Lincoln Lab), Tim Mattson (Intel), and Henning Meyerhenke (Humboldt Univ. Berlin).

I would also like to thank MIT Lincoln Lab, NVIDIA, Intel, Redis Labs, IBM, and the National Science Foundation for their support.

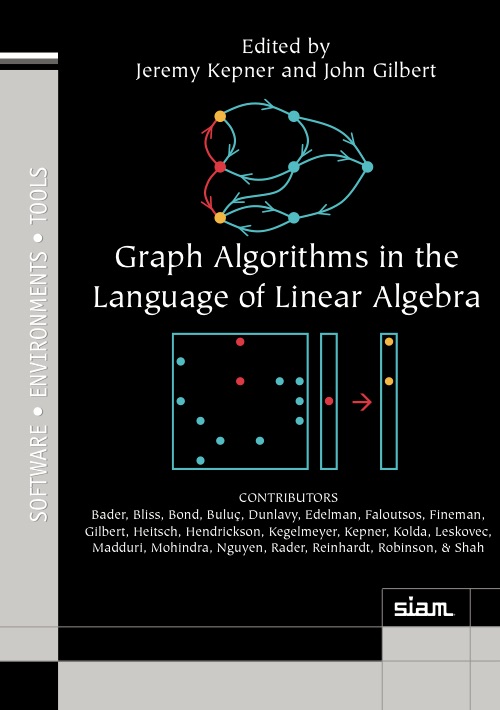

The mathematical foundation of GraphBLAS is the topic of the book Graph Algorithms in the Language of Linear Algebra, edited by Jeremy Kepner and John Gilbert (SIAM, 2011), part of the SIAM Book Series on Software, Environments, and Tools.

SuiteSparse:GraphBLAS was developed with partial support from NSF grant 1514406.

Developed with support from NVIDIA, Intel, MIT Lincoln Lab, Redis Labs, IBM, Julia Computing, and the National Science Foundation.